Why AI Hallucinates: Understanding Confident Mistakes in Modern AI Systems

Artificial intelligence tools like ChatGPT, Google Gemini, and Microsoft Copilot often sound intelligent and reliable. But sometimes, they give answers that are completely wrong — while sounding absolutely certain.

You might ask for a historical fact, a legal reference, or a scientific explanation. The response looks polished and confident. Later, you discover it was inaccurate or even fabricated.

This raises an important question: why does AI hallucinate, and why do these systems make confident mistakes?

Let’s break it down clearly.

What Is an AI Hallucination?

An AI hallucination is a situation in which an AI system generates information that is false, misleading, or entirely invented, but presents it as if it were correct.

An AI hallucination is not intentional deception. It happens because of how modern large language models work.

Instead of “knowing” facts the way humans do, these systems generate responses by predicting patterns in text. When the prediction goes wrong, the result is an error that may look convincing.

That is why people often ask why ChatGPT gives wrong answers, even when it sounds confident.

Why AI Hallucinates

To understand why AI hallucinates, we need to understand how it generates text in the first place.

1. Probabilistic Prediction

Modern AI systems rely on probabilistic prediction. This means they estimate a probability for each possible next word (more precisely, each token) based on patterns learned during training, and then pick from the likeliest candidates.

They do not verify facts in real time. They predict what sounds correct.

If the model has seen many similar sentence structures, it will generate a response that statistically fits — even if the specific detail is wrong.

This is a core reason behind many of AI's confident mistakes.
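To make the idea of probabilistic prediction concrete, here is a minimal Python sketch. It is a toy illustration, not how any particular product is implemented: the candidate words and their scores are invented for the example, and a real model would score tens of thousands of tokens rather than four.

```python
import math
import random

def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    top = max(scores.values())
    exps = {tok: math.exp(s - top) for tok, s in scores.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}

# Hypothetical scores for the next word after "The treaty was signed in".
# These numbers are made up; they stand in for what a trained model outputs.
scores = {"1648": 2.0, "1658": 1.6, "1748": 1.2, "Paris": 0.3}
probs = softmax(scores)

def sample_next(probs, rng):
    """Pick one candidate at random, weighted by its probability."""
    tokens = list(probs)
    weights = [probs[t] for t in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]

rng = random.Random(0)  # fixed seed so the run is repeatable
samples = [sample_next(probs, rng) for _ in range(1000)]

for tok, p in sorted(probs.items(), key=lambda kv: -kv[1]):
    print(f"{tok}: {p:.2f}")
```

In this toy setup the top candidate gets a probability of only about 0.43, so more than half of the sampled answers are one of the other options. Nothing in the process checks which year is actually correct; every candidate would be written out in the same fluent sentence.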

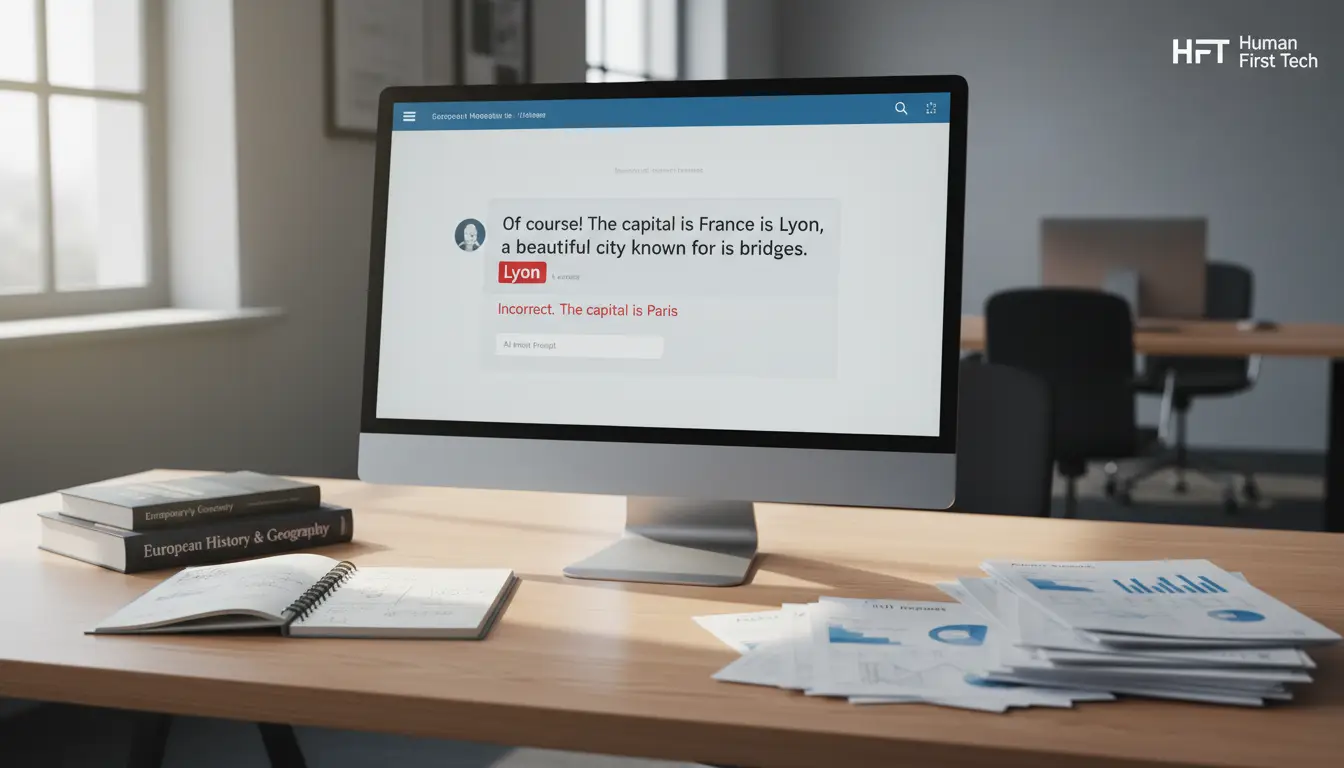

2. Pattern Completion

Large language models are designed to complete patterns.

If you start a sentence like, “The capital of France is…,” the system predicts “Paris” because it has seen that pattern millions of times.

But if you ask a complex or rare question, the AI may fill in gaps using similar patterns from unrelated contexts. When it lacks clear data, it may still produce a complete answer — even if it is incorrect.

The system prefers completion over silence.
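The "completion over silence" behavior can be sketched with a tiny word-counting model in Python. This is a deliberately simplified stand-in for a language model (real systems use neural networks, not lookup tables), and the four-sentence corpus is invented for the example.

```python
from collections import Counter, defaultdict

# A tiny, made-up training corpus.
corpus = [
    "the capital of france is paris",
    "the capital of italy is rome",
    "the capital of spain is madrid",
    "the capital of france is paris",
]

# Count which word follows each two-word context and each one-word context.
by_two = defaultdict(Counter)
by_one = defaultdict(Counter)
overall = Counter()
for sentence in corpus:
    words = sentence.split()
    overall.update(words)
    for i in range(1, len(words)):
        by_one[words[i - 1]][words[i]] += 1
    for i in range(2, len(words)):
        by_two[(words[i - 2], words[i - 1])][words[i]] += 1

def complete(prompt):
    """Return the most frequent next word, backing off when context is unseen."""
    words = prompt.lower().split()
    context = tuple(words[-2:])
    if context in by_two:
        return by_two[context].most_common(1)[0][0]
    # Unseen context: fall back to the last word alone.
    if words[-1] in by_one:
        return by_one[words[-1]].most_common(1)[0][0]
    # Nothing matches at all: still answer with the most common word overall.
    return overall.most_common(1)[0][0]

print(complete("The capital of France is"))    # seen pattern
print(complete("The capital of Atlantis is"))  # unseen pattern, still answers
```

For the France prompt the model has seen the exact pattern and returns "paris". For the Atlantis prompt it has no matching data, so it falls back to whatever most often follows "is" in the corpus and returns "paris" again. The function has no path that says "I don't know", which mirrors the behavior described above.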

3. Lack of True Understanding

A key difference between prediction and understanding is that AI does not truly understand meaning.

Humans connect ideas to experience, reasoning, and real-world knowledge. AI systems process text patterns without awareness or comprehension.

They simulate understanding based on structure and context. This works well for common topics, but it can fail in unfamiliar or ambiguous areas.

The result is a fluent but flawed answer.

4. Training Data Limitations

AI systems are trained on vast amounts of data, but that data has limits.

Some information may be outdated. Some areas may be underrepresented. Some facts may appear inconsistently across sources.

When training data is incomplete or mixed with errors, the model may reproduce those inconsistencies.

This highlights broader AI limitations that users should understand.

Why AI Sounds So Confident

One reason AI hallucinations are concerning is tone.

AI systems are optimized for clarity and fluency. They generate smooth sentences with confident phrasing. This makes answers feel reliable, even when they are not.

Fluency is not the same as factual certainty.

Because these models are trained to produce coherent language, they rarely express doubt unless explicitly prompted. The polished tone can create a false sense of authority.

This is why AI reliability depends heavily on context and user awareness.
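The gap between fluency and certainty can be illustrated with a short Python sketch. It assumes two invented next-word distributions, one where the model's prediction is well supported and one where it is close to a coin toss, and measures the spread with entropy. The numbers are hypothetical and chosen only to show the contrast.

```python
import math

def entropy_bits(probs):
    """Shannon entropy in bits: higher means the prediction is less certain."""
    return -sum(p * math.log2(p) for p in probs.values() if p > 0)

def greedy_pick(probs):
    """Return the single most likely candidate, as greedy decoding would."""
    return max(probs, key=probs.get)

# Hypothetical distributions; real models expose these only via their APIs, if at all.
well_supported = {"Paris": 0.97, "Lyon": 0.02, "Nice": 0.01}
poorly_supported = {"1912": 0.28, "1913": 0.26, "1911": 0.24, "1914": 0.22}

for name, probs in [("well supported", well_supported),
                    ("poorly supported", poorly_supported)]:
    print(f"{name}: answer={greedy_pick(probs)}, "
          f"entropy={entropy_bits(probs):.2f} bits")
```

Both cases produce exactly one answer that would be written out in the same confident sentence. The difference shows up only in the entropy, which is low in the first case and close to the two-bit maximum for four options in the second. That uncertainty is not visible in the generated text unless the system is built or prompted to surface it.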

Real-World Risks of AI Hallucinations

AI hallucinations are not just technical issues. They can have real-world consequences.

Education

Students may rely on AI-generated explanations or citations. If those references are fabricated, it can harm academic integrity and learning.

Business

Professionals using AI for reports, market research, or summaries may unknowingly include incorrect data, leading to flawed decisions.

Legal and Health Contexts

In legal or medical scenarios, incorrect information can be serious. AI-generated advice should never replace expert guidance. The risk increases when users assume the system is always accurate.

These risks highlight the need for human oversight in AI.

AI Prediction vs Human Judgment

Understanding the difference between AI prediction and human judgment is essential.

AI predicts patterns in text based on probability. Humans interpret meaning, evaluate context, and apply judgment.

AI does not have beliefs, intentions, or awareness. It does not “know” when it is wrong. It simply produces the most statistically likely continuation of a prompt.

That difference explains many of AI's confident mistakes.

The technology is powerful, but it is not equivalent to human reasoning.

Conclusion

So, the reason AI hallucinates comes down to how it works.

Large language models rely on probabilistic prediction and pattern recognition. They do not truly understand information. When gaps appear in data or context, they may generate responses that sound accurate but are not.

AI tools can improve productivity and access to information. But they are not infallible.

The responsible approach is clear: use AI as a supportive tool, not a final authority. Verify critical information. Apply human judgment.

AI is powerful — but it requires careful use, awareness of its limitations, and thoughtful oversight.